In the first part we learnt what Docker is and what it can do. Now we need to learn how to manage containers, so that we have control over them and an easy way to deploy them into different environments.

This is all done via images and commits, much like how you manage source code with Git. Once you've made the required changes to a container, whether that means updating the Ubuntu container with the text editor you like to use when trawling through the logs or deploying a new piece of the application, you commit the result as a new image.

To do this:

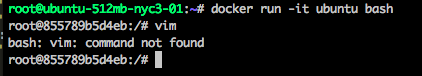

docker run -it ubuntu bash

Inside the containerised Ubuntu you can see that vim isn't installed, so a simple apt-get fixes this. You won't want to have to do that again next time.
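For reference, the install boils down to two apt-get calls. The sketch below wraps them in a small function. The name `install_editor` is made up for illustration, and it assumes you are root inside the container, which is the default in the ubuntu image.

```shell
# Hypothetical helper, meant to be pasted into the container's bash session.
# Usage: install_editor <package>
install_editor() {
  # The package index in a fresh image is usually empty or stale, so refresh it first.
  apt-get update || return 1
  # -y answers the confirmation prompt automatically.
  apt-get install -y "$1"
}
```

Inside the container, `install_editor vim` would then do the job.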

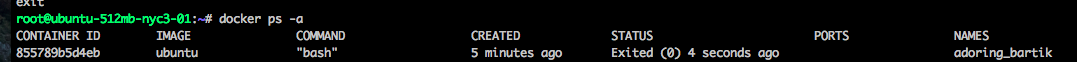

Exit out of the updated Ubuntu container and list all containers, including stopped ones:

docker ps -a

Find the container ID and run the following command:

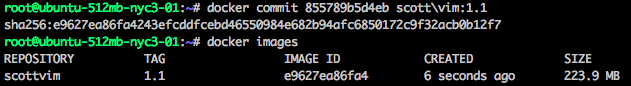

docker commit <container id> <name/thechange(addedvim)>:<version>

docker images

With docker images you can now see your new image, ready to run next time. To run your newly updated Ubuntu image, the command is:

docker run -it scottvim:1.1 bash
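The find-the-ID-and-commit steps above can be strung together in a small script. This is only a sketch: the function name `snapshot_container` is made up for illustration, and it assumes the container you just exited is the most recently created one, which is what `docker ps -l -q` reports.

```shell
# Hypothetical helper: commit the most recently created container as a new image.
# Usage: snapshot_container <name>:<version>
snapshot_container() {
  image="$1"
  # -l = latest created container (running or stopped), -q = print only the ID
  container_id="$(docker ps -l -q)"
  if [ -z "$container_id" ]; then
    echo "no container found to commit" >&2
    return 1
  fi
  docker commit "$container_id" "$image"
}
```

For example, `snapshot_container scottvim:1.1` followed by `docker images` should list the new image.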

With how simple it is to manage and update, it's little wonder this is taking off as a continuous integration platform.

Docker can also capture these steps in text files called Dockerfiles, so you can check them into source repositories and see the committed change history. This also saves time, since you don't have to spin up a new container to make changes.

With Dockerfiles you should combine commands as much as possible, as each executed instruction makes a new commit of that image. Here is an example of how to combine more than one command:

FROM ubuntu

This will update the Ubuntu image and install vim and nginx in one commit, meaning fewer commits and less wasted time.
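A Dockerfile that does this might look like the sketch below, written out with a shell heredoc so it can be pasted into a terminal. The exact apt-get flags are my assumption. The point is that the update and the install are chained with `&&` inside a single `RUN`, so together they produce one commit.

```shell
# Write a minimal Dockerfile into the current directory (overwrites any existing one).
cat > Dockerfile <<'EOF'
FROM ubuntu
# One RUN instruction = one commit (layer): update and install together.
RUN apt-get update && apt-get install -y vim nginx
EOF
```

Splitting this into separate `RUN` lines would still work, but each line would add its own commit to the image.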

Now that you've got the Dockerfile, it needs to be built. This is done like so:

docker build -t scott/<imagename>:<tag> <context path>

The tag is normally a version number. The context path (the web project, for example) is the location from which Docker collects files, such as source files that the application needs in order to run.
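As a rough illustration of the context path, the sketch below wraps the build in a function that first checks the context directory actually holds a Dockerfile. The name `build_image` and the example image and folder names are hypothetical.

```shell
# Hypothetical helper: build an image from a project directory.
# Usage: build_image <name>:<tag> [context path]
build_image() {
  image="$1"
  context="${2:-.}"   # default to the current directory
  # By default Docker looks for a file named "Dockerfile" at the root of the context.
  if [ ! -f "$context/Dockerfile" ]; then
    echo "no Dockerfile in $context" >&2
    return 1
  fi
  docker build -t "$image" "$context"
}
```

With a project folder called `web`, that would be `build_image scott/web:1.0 ./web`. Everything under the context directory is sent to Docker for the build, so it is worth keeping that folder lean.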

Then you can just start it like you had been before.

docker run <nameofimage>:<tag>

Easy as that.

After playing around and creating lots of containers whilst making all these images, you will want to clean up. To remove the containers that we don't need any more, the command is:

docker rm <container id>

For images, it's:

docker rmi <image>
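To clear out many containers in one go, the IDs can be fed to `docker rm` from `docker ps`. The sketch below is one way to do it. The function name is made up, and limiting it to exited containers is my choice so that nothing still running gets touched.

```shell
# Hypothetical helper: remove every container that has exited.
remove_stopped_containers() {
  # -a = include stopped containers, -q = IDs only, -f status=exited = exited ones only
  ids="$(docker ps -a -q -f status=exited)"
  if [ -z "$ids" ]; then
    echo "nothing to remove"
    return 0
  fi
  # $ids is intentionally unquoted so that each ID becomes its own argument.
  docker rm $ids
}
```

Images are removed by name in the same spirit, for example `docker rmi scottvim:1.1`. Docker refuses to remove an image while a container still uses it, so clear the containers first.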